The FDA has already given feedback to several pharmaceutical manufacturers, asking for more consistent measurements of product characteristics, particularly the potency assays that companies use to assess the strength of their treatments.

Several developers have also had to work with the FDA on how they compare commercial-scale production with earlier clinical manufacturing.1 As a result, many companies are pushing back their submission timelines.

The agency has also announced plans to update its guidance, with strong indications that more changes are forthcoming.2 Current FDA recommendations address the source material (cells and/or tissues) recovered from donors and how the cell and gene therapy (CGT) product will be manufactured (cell expansion in culture, viral reduction steps, formulation, etc.).

The submission process requires complete and accurate information regarding the facilities involved in both manufacturing and testing the drug that is the subject of the application.

Consistent measurements are particularly difficult for CGT products, however. These therapies rely on biological material, such as patient cells or viruses, so scaling up production is complicated: each and every sample must be tested. That in turn requires advanced methods for data storage and analysis that are not typical of other biopharmaceutical processes, in which sampling from large production lots is the norm.

Marilyn Matz

Tracking detailed results for robustness and yield therefore poses challenges during manufacturing. CGT developers are evaluating what this possible shift in guidance means for their drug submissions and are reviewing new tools to help them meet the upcoming changes.

Cell and gene therapy challenges

CGT holds great promise for improving the lives of patients with complex diseases and significant unmet medical needs. However, the field has been constrained by critical limitations in manufacturing technology, vector design capabilities and cost.

Ex vivo cell processing methods require optimisation and automation before they can be scaled. Other challenges include characterising complex materials, raw material and process variability, and limited knowledge of critical process parameters (CPPs) and their effect on the critical quality attributes (CQAs) of products.3

The field already uses advanced data-processing routines for its analytical techniques, and developers assess the reliability of their data statistically.

However, the variety and volume of data in CGT manufacturing pose new and significant challenges, because each sample connects directly to the recipient patient.
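To make that per-patient link concrete, here is a minimal sketch of a hypothetical data model (not taken from any particular system) that stores every test result together with the recipient patient it belongs to and groups results by patient, roughly as a chain-of-identity record might. The class, field names, IDs and values are illustrative assumptions.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class SampleResult:
    """One test result for one sample; all field names are hypothetical."""
    sample_id: str
    patient_id: str   # the recipient patient this sample belongs to
    assay: str        # e.g. "viability" or "potency"
    value: float


def results_by_patient(results):
    """Group per-sample results under their recipient patient (chain of identity)."""
    grouped = defaultdict(list)
    for r in results:
        grouped[r.patient_id].append(r)
    return dict(grouped)


# Illustrative values only, not real test data
results = [
    SampleResult("S-001", "P-17", "viability", 91.2),
    SampleResult("S-002", "P-17", "potency", 0.84),
    SampleResult("S-003", "P-42", "viability", 88.5),
]
by_patient = results_by_patient(results)
print({pid: [r.sample_id for r in rs] for pid, rs in by_patient.items()})
# {'P-17': ['S-001', 'S-002'], 'P-42': ['S-003']}
```

Because every result carries its patient ID, the data cannot be pooled into one large lot the way conventional batch sampling allows, which is one reason storage and analysis needs grow with the number of patients.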

Small sample sizes and a lack of granularity mean that traditional data analysis may not provide the detail needed to improve quality and identify previously unforeseen CQAs.

Zachary Pitluk

Current FDA guidance states that acceptance criteria should be established and justified based on data from lots used in preclinical and/or clinical studies, data from lots used to demonstrate manufacturing consistency, data from stability studies and relevant development data.
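As a rough illustration of deriving limits from lot data, the sketch below proposes provisional acceptance limits as the mean plus or minus k sample standard deviations of historical lot results. The mean ± k·SD rule, the default k = 3 and the potency values are assumptions for illustration only; FDA guidance does not prescribe this formula, and real specifications also weigh clinical relevance, assay variability and stability data.

```python
import statistics


def provisional_limits(lot_values, k=3.0):
    """Return (lower, upper) = mean -/+ k * sample standard deviation.

    Assumption: mean +/- k*SD is one common starting point for proposing
    limits from historical lots; it is not a method prescribed by FDA.
    """
    if len(lot_values) < 2:
        raise ValueError("need at least two lots to estimate spread")
    mean = statistics.mean(lot_values)
    sd = statistics.stdev(lot_values)  # sample (n - 1) standard deviation
    return mean - k * sd, mean + k * sd


def within_limits(value, limits):
    """True if a new batch result falls inside the (lower, upper) limits."""
    lower, upper = limits
    return lower <= value <= upper


# Hypothetical relative-potency results from clinical and consistency lots
lots = [0.95, 1.02, 0.98, 1.05, 1.00]
limits = provisional_limits(lots)
print(within_limits(1.01, limits), within_limits(1.30, limits))  # True False
```

A smaller k gives a narrower interval; whether a narrower range is scientifically and clinically justified is a judgment the arithmetic cannot make.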

For later-stage investigational studies, in which the primary objective is to gather meaningful data about product efficacy, FDA recommends that acceptance criteria be tightened to ensure that batches are well defined and consistently manufactured. CGT manufacturers aim to meet these expectations with new tools for processing unstructured data, making analysis more efficient and surfacing information that would otherwise go unused.

Extracting more value from datasets

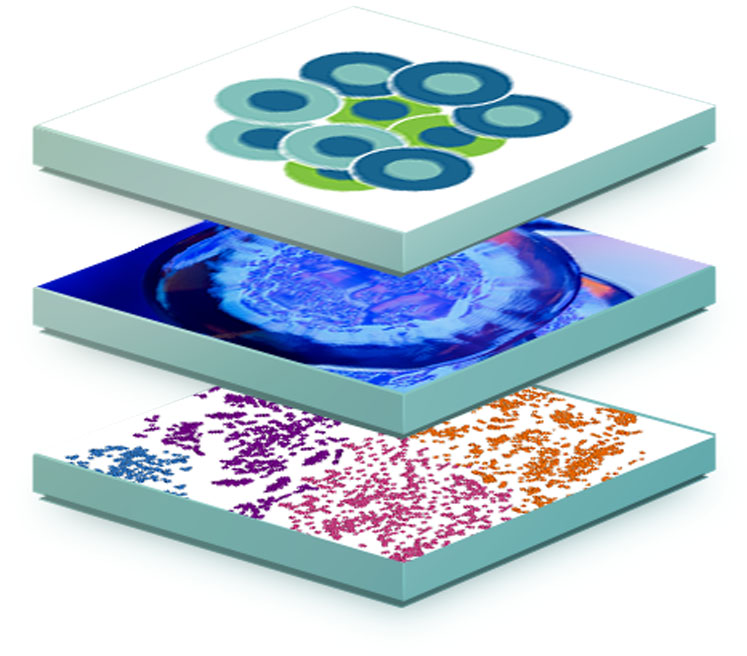

Multidimensional data has been used successfully in a variety of applications in the chemical and biochemical industries, including bioprocess monitoring, the identification of CPPs, and the assessment of process variability and comparability during development, scale-up and technology transfer.

As a result, drug developers see it as a potential tool for tackling some of the challenges of developing CGT manufacturing processes and for responding to potential changes in FDA guidance.3
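Principal component analysis (PCA) is one of the multivariate techniques commonly applied to bioprocess monitoring of this kind. The minimal sketch below, on simulated data, fits PCA to a set of reference batches and scores a new batch with Hotelling's T² statistic, a standard measure of how far a batch lies from normal operation in the model's score space. The simulated data, the choice of two components and the function names are assumptions; a real monitoring scheme would also set a statistical control limit for T² and track model residuals, both omitted here.

```python
import numpy as np


def fit_pca(X, n_components):
    """Fit PCA on reference batches (rows = batches, columns = process variables)."""
    mean = X.mean(axis=0)
    std = X.std(axis=0, ddof=1)
    Z = (X - mean) / std                      # autoscale each variable
    # Rows of Vt are principal directions; singular values give their variance
    _, s, Vt = np.linalg.svd(Z, full_matrices=False)
    loadings = Vt[:n_components].T            # shape (variables, components)
    variances = s[:n_components] ** 2 / (X.shape[0] - 1)
    return mean, std, loadings, variances


def hotelling_t2(x, model):
    """Hotelling's T^2 of one batch in the PCA score space."""
    mean, std, loadings, variances = model
    scores = ((x - mean) / std) @ loadings
    return float(np.sum(scores ** 2 / variances))


rng = np.random.default_rng(0)
# Simulated reference data: 30 batches x 5 correlated process variables
base = rng.normal(size=(30, 2))
X_ref = np.column_stack([
    base[:, 0],
    base[:, 0] + 0.1 * rng.normal(size=30),
    base[:, 1],
    2.0 * base[:, 1],
    rng.normal(size=30),
])
model = fit_pca(X_ref, n_components=2)

typical = X_ref.mean(axis=0)                          # batch at the centre
unusual = typical + 4 * X_ref.std(axis=0, ddof=1)     # batch far from normal
print(hotelling_t2(typical, model) < hotelling_t2(unusual, model))  # True
```

Compressing many correlated variables into a few scores is what lets a single statistic flag an abnormal batch even when no individual variable looks extreme on its own.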

As across the life sciences, CGT discovery has involved collecting and analysing ever-larger datasets. This requires IT and computing solutions that give researchers flexible, scalable and easy-to-use tools for interrogating, and making connections across, massive heterogeneous datasets.

Beyond sheer scale, the expanding variety of the data adds to the complexity of these analyses, and such datasets are often too large and complex for traditional database management systems to process effectively.

Making connections

As their datasets evolve and expand, CGT manufacturers need an analytics platform that lets them synthesise information interactively and that can scale and adapt affordably.

To meet this need, Paradigm4 developed its next-generation analytics platform, REVEAL: Analytical Development, to store and interrogate trillions of data points. Scientists using it can easily extract the data required by FDA and make connections that would not be possible with basic data storage and management systems.

Case study

A recent publication from Paradigm4 and researchers at Bristol Myers Squibb illustrates how rapid insights can be gained using a scalable platform designed for sparse data, with integrated maths functions that can routinely work with billions of cells.4 The analytical database used in the study enabled cells to be selected and analysed across multiple studies.

Cells can be selected using individual metadata tags, more complex hierarchical ontology filtering and gene expression threshold ranges, including the coexpression of multiple genes. The tags on selected cells can be further evaluated to test biological hypotheses.
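The selection logic described above can be sketched in plain Python. In this hypothetical example each cell stores only its non-zero expression values (a sparse layout), a toy ontology maps child cell types to their parents, and a query combines exact metadata tags, ontology ancestry and per-gene expression ranges, where requiring every listed gene to be in range expresses coexpression. The cell records, ontology and function names are invented for illustration and do not reflect the REVEAL: SingleCell API or data model.

```python
# Hypothetical cell-type ontology: child term -> parent term
ONTOLOGY_PARENT = {
    "CD8-positive T cell": "T cell",
    "CD4-positive T cell": "T cell",
    "T cell": "lymphocyte",
    "B cell": "lymphocyte",
    "lymphocyte": "leukocyte",
}


def is_a(term, ancestor):
    """True if term equals ancestor or descends from it in the ontology."""
    while term is not None:
        if term == ancestor:
            return True
        term = ONTOLOGY_PARENT.get(term)
    return False


# Each cell stores only its non-zero expression values (a sparse layout)
cells = [
    {"id": "c1", "tags": {"tissue": "blood", "cell_type": "CD8-positive T cell"},
     "expr": {"CD3E": 5.1, "CD8A": 3.2}},
    {"id": "c2", "tags": {"tissue": "blood", "cell_type": "B cell"},
     "expr": {"MS4A1": 4.0}},
    {"id": "c3", "tags": {"tissue": "lung", "cell_type": "CD4-positive T cell"},
     "expr": {"CD3E": 2.2, "CD4": 1.9}},
    {"id": "c4", "tags": {"tissue": "blood", "cell_type": "CD4-positive T cell"},
     "expr": {"CD3E": 0.4}},
]


def select(cells, tags=None, under=None, ranges=None):
    """Filter by exact metadata tags, ontology ancestry and expression ranges.

    `ranges` maps gene -> (low, high); every listed gene must fall in range
    (coexpression). Genes absent from a cell's sparse dict count as 0.0.
    """
    tags, ranges = tags or {}, ranges or {}
    selected = []
    for cell in cells:
        if any(cell["tags"].get(k) != v for k, v in tags.items()):
            continue
        if under and not is_a(cell["tags"].get("cell_type"), under):
            continue
        if all(lo <= cell["expr"].get(g, 0.0) <= hi
               for g, (lo, hi) in ranges.items()):
            selected.append(cell["id"])
    return selected


print(select(cells, tags={"tissue": "blood"}, under="T cell",
             ranges={"CD3E": (1.0, 10.0)}))  # ['c1']
```

Filtering by ontology ancestry means a query for "T cell" also returns cells annotated with more specific subtypes, which is the main advantage over matching annotation tags exactly.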

One example is identifying the most prevalent cell-type annotation tag among the returned cells. Such searches completed in seconds, a time scale that supports rapid iteration and timely decision-making. The paper suggests that the same approach could be applied more broadly, including to CGT products and manufacturing.
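At its core, finding the most prevalent annotation among returned cells is a frequency count. A minimal sketch using Python's `collections.Counter` on hypothetical annotation labels follows; it illustrates only the aggregation step and makes no attempt to model how a scalable backend performs it over billions of cells.

```python
from collections import Counter


def most_prevalent(annotations):
    """Return (label, count) for the most common annotation, or None if empty.

    Ties are broken by first appearance, following Counter.most_common.
    """
    counts = Counter(annotations)
    return counts.most_common(1)[0] if counts else None


# Hypothetical cell-type annotations on the cells returned by a query
returned = ["T cell", "NK cell", "T cell", "monocyte", "T cell", "NK cell"]
print(most_prevalent(returned))  # ('T cell', 3)
```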

Looking ahead

In recent years, CGT has become a reality for the treatment of many devastating diseases. The industry must now rethink how gene therapies are designed, developed and produced, fully integrating advanced manufacturing technologies, computational tools and development capabilities.

New data analysis systems can harness the potential of multidimensional data, both to satisfy upcoming changes to FDA guidance and to improve related manufacturing processes and drug testing.

Recent developments in data computing platforms can provide transformative improvements in cost, scale and efficiency that will help the gene therapy field to achieve its full potential across a range of therapeutic areas.

Companies that integrate platform capabilities delivering better treatments, lower costs and broader applications of the technology will drive innovation in the field, with the ultimate goal of getting safer therapies to patients faster.

References

- www.biopharmadive.com/news/fda-marks-gene-therapy-consistency/600445.

- www.fda.gov/vaccines-blood-biologics/biologics-guidances/cellular-gene-therapy-guidances.

- J. Emerson, B. Kara and J. Glassey, "Multivariate Data Analysis in Cell Gene Therapy Manufacturing," Biotechnol. Adv. (2020): doi: 10.1016/j.biotechadv.2020.107637.

- N. Kumar et al., "Rapid Single Cell Evaluation of Human Disease and Disorder Targets Using REVEAL: SingleCell™," BMC Genomics 22, 5 (2021): https://doi.org/10.1186/s12864-020-07300-8.